Five Democratic pollsters released a public letter yesterday about polling challenges in the 2020 election. The five pollsters – whom you have likely never heard of (unless you have paid very close attention to my posts) – are ALG Research (B/C rating from FiveThirtyEight), Garin-Hart-Yang Research Group (B-), GBAO Strategies (B/C), Global Strategy Group (B/C), and Normington Petts (B/C). I will be honest: I had never heard of Normington Petts either. ALG, however, was Biden’s pollster during the election. One reason you probably have not heard of these pollsters is that they work for Democratic candidates and – with some exceptions – do not publicly release results. That is, they mostly conduct internal campaign polls.

There is nothing new in this letter, except that its conclusions are now supported by voter file data rather than resting on hypotheses alone. We have heard these explanations before, particularly at the IOP polling conference I reported on a few weeks ago, and some of the pollsters at that conference had likely already seen voter files. The reasons for the polling errors were not difficult to guess. What will be difficult is doing something about them.

There are two types of non-probability error that could explain misses of the sort we saw in 2020. The first consists of errors in building a turnout model. The second is any of a number of measurement errors.

Turnout Error

One problem here is that pollsters say they need complex turnout models because simply asking respondents whether they will vote produces its own errors; the assumption is that people do not want to admit they will not vote. I do not find that convincing. I think the error in asking has more to do with people who intend to vote and then fail to do so than with people lying about their plans. Those are not the same thing. Notably, just one pollster got the Iowa results right, and she adamantly refuses to build turnout models. Ann Selzer does two things differently from most pollsters: she keeps interviews brief, and she asks respondents whether they will vote and takes them at their word. It is possible this method works in Iowa but not elsewhere (although Selzer has had success in other Midwestern states).

“Now that we have had time to review the voter files from 2020, we found our models consistently overestimated Democratic turnout relative to Republican turnout in a specific way. Among low propensity voters—people who we expect to vote rarely—the Republican share of the electorate exceeded expectations at four times the rate of the Democratic share. This turnout error meant, at least in some places, we again underestimated relative turnout among rural and white non-college voters, who are overrepresented among low propensity Republicans.” (Democratic Pollster Letter, emphasis in original.) However significant this may seem, the pollsters have concluded that it made little difference – except possibly in the presidential race. Generally, the pollsters found that their turnout models were fairly accurate.

Measurement Error

A measurement error in polling refers to errors (typically non-probability errors) made by interviewers, survey designers, or analysts. It can result from poor survey design, a confusing questionnaire, or incorrect assumptions about demographic groups.

Late Movement

In 2016, a large number of voters expressed displeasure with both major party candidates very late in the campaign, and in the end most of them broke for Trump in the final days. One reason pollsters missed this is that polling cannot catch such a late shift unless, perhaps, pollsters keep fielding surveys every day through the end of the campaign. Another reason, which pollsters (or at least their communications staff) can address more easily, is the tendency to ignore undecided voters (often captured as “other” or a similar designation). This is an important reason why some polls show big margins even when the leading candidate is at or below about 50% support. Biden’s chief pollster made it clear at the recent IOP polling conference that “Democrats get what they poll” and that margins are unimportant. In this sense, national polling was generally spot-on in estimating Biden’s support. Where it fell short was in failing to convey how much closer the election really was, since the double-digit margins could be explained only by the presence of undecided or unlikely voters. (In 2016, minor party candidates polled, and received, a relatively significant share of the vote. That did not happen in 2020.)
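To make the margin arithmetic concrete, here is a minimal Python sketch using purely hypothetical numbers (not drawn from any actual poll): a poll shows the Democrat at 51%, the Republican at 41%, and 8% undecided or “other.” The function `final_margin` and its `rep_share` parameter are illustrative assumptions, and the sketch assumes every undecided respondent ends up voting for one of the two major candidates.

```python
def final_margin(dem: float, rep: float, undecided: float, rep_share: float) -> float:
    """Return the Democratic-minus-Republican margin (in points) after
    allocating undecided voters. Hypothetical helper: rep_share is the
    assumed fraction of undecideds who break Republican (0.0 to 1.0)."""
    if not 0.0 <= rep_share <= 1.0:
        raise ValueError("rep_share must be between 0 and 1")
    dem_final = dem + undecided * (1.0 - rep_share)
    rep_final = rep + undecided * rep_share
    return dem_final - rep_final


# Hypothetical poll: Democrat 51, Republican 41, undecided/other 8.
# The headline margin is 10 points, but the Democrat is only at 51%.
for share in (0.5, 0.8, 1.0):
    margin = final_margin(51, 41, 8, share)
    print(f"undecideds break {share:.0%} Republican -> margin {margin:+.1f}")
```

With an even split, the 10-point lead holds. If the undecideds break heavily Republican, as most late deciders broke for Trump in 2016, the same poll is consistent with a race of a few points, even though the Democrat’s own share was measured accurately.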

The five pollsters conclude that the polls were stable and that late movement did not contribute to the polling errors. But if you think the polls were wrong in 2020 because of the margins (that is, because Biden won by 4.5 points instead of ten), then late movement is the likely culprit. That is not actually a pollster miss, though. It is an analytical and communication failure, mostly on the part of media outlets reporting on the polls.

COVID Error

One popular hypothesis among pollsters before the voter files were analyzed was that Democratic supporters were more likely than Republicans to respond to surveys because they were more likely to observe stay-at-home orders and other restrictions related to the COVID-19 pandemic. Even Trump’s pollster said he observed this phenomenon. The Democratic pollsters draw no conclusions about its impact, since there is likely no way to know for sure without surveying voters and asking them directly (and even then, those surveys may carry their own measurement errors).

Social Trust

This appears to be the consensus pick for the most significant factor in the 2020 polling error. However, there is simply no data on it right now; the evidence is almost entirely anecdotal. Still, it seems like a plausible explanation, and the Democratic pollsters agree (so does Pew, see below). While there is little evidence that survey respondents purposely lie to pollsters, there is reason to believe that a partisan divide in trust of American institutions makes it more difficult to reach Republicans than Democrats. This phenomenon may have reinforced the COVID error and contributed to a large measurement error, or the two errors may simply overlap.

The Democratic pollsters agree that all of these things likely played some role in polling error in 2020, but conclude that “While there is evidence some of these theories played a part, no consensus on a solution has emerged. What we have settled on is the idea there is something systematically different about the people we reached, and the people we did not. This problem appears to have been amplified when Trump was on the ballot, and it is these particular voters who Trump activated that did not participate in polls.” (Democratic Pollster Letter, emphasis in original).

Pew and Challenges to Panel Surveys

This letter has overshadowed a report Pew Research released earlier this month. Pew uses panels, so the challenges it faces differ from those facing the Democratic pollsters. Pew’s report analyzed whether its American Trends Panel (ATP) “is in any way underrepresenting Republicans, either by recruiting too few into the panel or by losing Republicans at a higher rate.” Pew recruits ATP participants offline by sampling residential addresses.

There were three main findings from the Pew study (and remember, this only relates to their methodology):

Adults joining the ATP in recent years are less Republican than those joining in earlier years.

Trump voters were somewhat more likely than others to leave the panel (stop taking surveys) since 2016, though this is explained by their demographics.

People living in the country’s most and least pro-Trump areas were somewhat less likely than others to join the panel in 2020.

Pew concludes that these issues are related to the problem of social trust. Republicans are doing a better job of attracting low-trust Americans, and because these potential voters distrust institutions, including polling, that helps explain why Pew has seen a decrease in Republican participation in its ATP.

A Trump Effect?

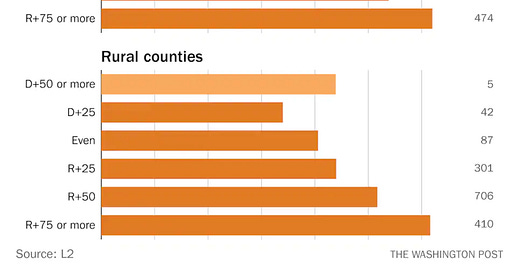

The Democratic pollsters believe that Trump’s presence on the ballot may have been a unique factor in the turnout problem, and The Washington Post’s Philip Bump seems to agree. Bump finds that redder counties had more voters who skipped the mid-terms but voted in 2016 and 2020. This provides some support for the idea that Trump on the ballot presents a unique challenge for pollsters. If that is true, however, it is also a challenge for the GOP in winning future elections, both mid-terms and presidentials.

According to Bump, “[t]his doesn’t prove that Trump was dependent on voters who only came out to vote for him. All it does is provide one more bit of data suggesting that it might be the case that his candidacy had an unusual effect, particularly in more rural areas. (There’s a correlation between population density and college education. In rural counties, an average of 20 percent of White adults have degrees; in large urban counties, the average is 45 percent.)”

In a subscriber briefing this morning, the Cook Political Report’s Amy Walter said that the polling problem is simply that pollsters are not finding new Trump voters. “Is this going to be as important … when Trump is no longer on the ballot?” she asked. In 2018, there were “no big [polling] surprises,” suggesting that Trump’s absence from the ballot mitigated the error. Another supporting observation is that polling on the Democratic number has been accurate, while the Republican number has been harder to estimate. Walter thinks the issue is that Trump overperforms the polls; the Democrat (whether Clinton or Biden) has not underperformed them. Like some of the Democratic pollsters, Walter thinks we should stop paying so much attention to polling margins.

Walter may be correct about that when it comes to presidential elections, but Pew reports that “Looking at final estimates of the outcome of the 2020 U.S. presidential race, 93% of national polls overstated the Democratic candidate’s support among voters, while nearly as many (88%) did so in 2016.” So the problem might be more complex. As with so much of political polling and prognostication, the sample of elections is so small that it is hard to predict how one phenomenon or another will play out in the future, even if that future is next year’s mid-terms.

Both Pew and the Democratic pollsters have some ideas for addressing the challenges they have articulated, but I am holding off on discussing those until the American Association for Public Opinion Research (AAPOR) releases its comprehensive, industry-wide analysis of the 2020 polls. That report is expected shortly.

———————

Program Notes

I apologize for not writing more recently. I have been working on some interesting new ideas for the blog, as well as an exciting partnership that is still in the works (so I can’t say anything about it right now). Please follow me on Twitter for more frequent posts. I am planning to create a new Facebook page specifically for MOE. When that happens I will announce it here.

Look for a new series: “Census, Apportionment, and Redistricting.” An introductory post should be up by the end of this week. Also, in the next few weeks I will be circling back on another series I began before the 2020 campaign got into full swing last year. That series is about the institutionalization of the two-party system and the challenges that presents for minor parties and independent candidates working with major parties.

Finally, we heard this morning that the Cook Political Report will release an update of its Partisan Voting Index (PVI) tomorrow. CPR first created the PVI in 1997 to give readers a sense of how much more Republican or Democratic a congressional district votes than the country as a whole does. It is definitely worth checking out.